A large DEA project generally draws on the expertise of numerous individuals. The concepts and objectives phase requires communication skills to work closely with the evaluated entities. These are often (but not necessarily) the organisations that are interested in the DEA results. Naturally, undertaking a collaborative DEA project increases the complexity of the process. There are also potential benefits: by suitably combining the available expertise, a project can deliver a more in-depth analysis, additional insights and a broader range of operational characteristics that can be taken into account.

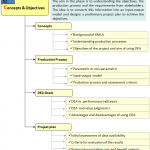

The concepts and objectives phase (systematically presented in Figure 3) aims at defining the research question. Besides determining the objectives of the study, this involves determining the operational environment of the observations and the production processes. A clear, a priori agreed definition of the environment can avoid heated discussions when the results are evaluated (phase 5). Indeed, because DEA measures relative efficiency [i.e., efficiency relative to best-practice observations; see Thanassoulis (2001) and Zhu (2003) for a comprehensive introduction to DEA with a software tool], evaluated entities can easily argue that they are ‘totally’ different from the other observations in the sample and, as such, cannot be compared with them. A clear and sound definition of the research question and the operational environment helps avoid such discussions.
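To make the notion of relative efficiency concrete, the following minimal sketch scores each unit against the best-practice unit in the sample. It assumes the simplest possible setting, which is not prescribed by the text: one input, one output and constant returns to scale, where the DEA score reduces to a unit's output/input ratio divided by the highest ratio observed. The function name `crs_efficiency` and the data are hypothetical.

```python
def crs_efficiency(units):
    """Relative efficiency with one input and one output (constant returns to scale).

    units: dict mapping a unit name to (input_quantity, output_quantity).
    Returns a dict mapping each name to a score in (0, 1]; the best-practice
    unit(s) in the sample score 1.0.
    """
    # Productivity of each unit: output produced per unit of input.
    productivity = {name: y / x for name, (x, y) in units.items()}
    # The best-practice benchmark is the highest productivity in the sample.
    best = max(productivity.values())
    return {name: p / best for name, p in productivity.items()}


# Hypothetical data: (input, output) for three evaluated entities.
units = {"A": (10.0, 20.0), "B": (8.0, 12.0), "C": (5.0, 5.0)}
scores = crs_efficiency(units)
for name in sorted(scores):
    print(f"{name}: {scores[name]:.2f}")
```

Every score depends on which units are in the sample, which is why agreeing on the comparison set and the operational environment up front matters.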

Once the research question is defined, the discussion should focus on the most appropriate technique to assess the problem. Different techniques could yield different results. For example, composite indicators summarize the performance on multiple inputs and multiple outputs in one synthetic indicator. This has advantages: performance can be read at a single glance, is easy to discuss with a general audience, and makes it easy to set targets. However, composite indicators also have drawbacks, such as the loss of information and the need to weight the different sub-indicators (OECD, 2008). Every technique for composite indicators (e.g., DEA, SFA, performance indicators) has its own peculiarities. The different stakeholders should be aware of this, again to avoid discussion in the evaluation phase (for a discussion of the peculiarities of the techniques, see Fried et al., 2008).
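The weighting issue can be illustrated with a small sketch. It assumes a plain fixed-weight average of sub-indicators that are already normalized so that higher is better; this is only one of many ways to build a composite indicator and is not the method of any cited source. The names `composite`, `ranking`, `quality` and `cost` and all figures are hypothetical.

```python
def composite(sub_indicators, weights):
    """Weighted average of normalized sub-indicators (higher is better)."""
    total_weight = sum(weights.values())
    return sum(weights[k] * sub_indicators[k] for k in weights) / total_weight


def ranking(entities, weights):
    """Entity names ordered from best to worst composite score."""
    return sorted(
        entities,
        key=lambda name: composite(entities[name], weights),
        reverse=True,
    )


# Hypothetical normalized sub-indicators for two evaluated entities.
entities = {
    "A": {"quality": 0.9, "cost": 0.4},
    "B": {"quality": 0.5, "cost": 0.9},
}

quality_first = ranking(entities, {"quality": 0.8, "cost": 0.2})
cost_first = ranking(entities, {"quality": 0.2, "cost": 0.8})
print(quality_first, cost_first)
```

With these figures the two weighting schemes reverse the ranking, so the choice of weights is itself something stakeholders need to agree on.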

Every study faces a trade-off between an analysis at the micro level and one at the macro level. Micro-level studies have the advantage that they (normally) contain more observations, which are also more comparable with each other. Macro-level observations allow the researcher to see a broader picture, but there are fewer of them. Directly connected to this trade-off is the issue of identifying the appropriate level of decision making, i.e., can the micro (macro) level act independently?

A final step in the first phase consists of designing the project plan. The plan should be conceived as broadly as possible. It, again, aims at avoiding discussion in the evaluation phase. Indeed, empirical applications in general, and data-driven approaches such as DEA in particular, are sometimes sensitive to the provided data. Because traditional frontier techniques such as DEA are deterministic (i.e., they do not allow for noise), they may be sensitive to outlying observations (e.g., Simar, 1996). Such outliers could arise from measurement errors or atypical observations. Banker and Natarajan (2004) supplied statistical tests based on DEA efficiency scores. Therefore, this step should carefully examine the availability of correctly measured data. In addition, once the objectives and the evaluation technique are determined, the stakeholders should agree on the criteria to evaluate the results. For example, will they use a “naming and shaming” approach (i.e., sunshine regulation; Marques, 2006) or a “yardstick competition” approach (i.e., using the outcomes to set maximum prices or revenues; Bogetoft, 1997), or will the results only be reported internally?
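The sensitivity to outlying observations can be checked informally with a leave-one-out comparison. The sketch below is a simple illustration under the same assumptions as a one-input, one-output, constant-returns setting; it is not the procedure of Simar (1996) nor the tests of Banker and Natarajan (2004). The function names and the data, including the suspicious unit `X`, are hypothetical.

```python
def crs_efficiency(units):
    """Relative efficiency with one input and one output (constant returns to scale)."""
    productivity = {name: y / x for name, (x, y) in units.items()}
    best = max(productivity.values())
    return {name: p / best for name, p in productivity.items()}


def leave_one_out_shift(units):
    """For each unit, the largest change in any other unit's score when it is dropped.

    A large value flags a unit that single-handedly shapes the frontier and
    whose data therefore deserve a careful check. Needs at least two units.
    """
    base = crs_efficiency(units)
    shifts = {}
    for dropped in units:
        rest = {n: v for n, v in units.items() if n != dropped}
        rescored = crs_efficiency(rest)
        shifts[dropped] = max(abs(rescored[n] - base[n]) for n in rest)
    return shifts


# Hypothetical data: X reports an implausibly high output for its input,
# for example because of a measurement error.
units = {"A": (10.0, 20.0), "B": (8.0, 12.0), "C": (5.0, 5.0), "X": (1.0, 10.0)}
shifts = leave_one_out_shift(units)
for name in sorted(shifts):
    print(f"drop {name}: max score change {shifts[name]:.2f}")
```

In this example only the removal of `X` changes the other scores, which suggests verifying its data before the results are discussed with stakeholders.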

